SeeSpace InAir promises a whole new way of interacting with your television. Using an inline HDMI dongle, they hope to enhance your favorite programming with additional information from the web. It’s definitely a cool idea if done well, and the hardware technology is there, but the real question is where this extra content is going to come from.

Hardware

The InAir’s hardware is pretty straightforward. This little dongle sits between your cable box and TV, and when powered by a USB cable, it will overlay all kinds of cool information on top of your regularly scheduled programming. Nothing here is exotic: Google’s Chromecast streams HD internet video to a small dongle and retails for a piddling $35, so there are certainly a number of solutions SeeSpace could be using to make this device work.

A careful perusal of their campaign, however, turned up no mention of HDCP. HDCP (“High-bandwidth Digital Content Protection”) is a form of hardware DRM that is built into a number of television screens and digital media players. It’s designed to encrypt the data leaving a media player so that other hardware can’t record it and make unauthorized copies. The only way to make sense of this data is to license the HDCP technology that allows you to decrypt the signal. Most major television manufacturers have done this, and since a television screen has no way to digitally record what it displays, certification is easy for them; the more a device looks like a video recorder, the harder it is to get certified.

In a comment, they stated that it will be HDCP compliant:

But they haven’t gotten certified yet. A whitepaper on the topic had this to say about compliance requirements:

Licensed technology Adopters are required to meet content protection requirements. For example, the HDCP License Agreement prohibits high-definition digital video sources from transmitting protected content to non-HDCP enabled receivers, and such devices from making copies of decrypted content.

If their content recognition algorithm requires any video frames to be transmitted over the internet and stored, they may not qualify for HDCP compliance. For the consumer, that means cable boxes that require HDCP will not work with the InAir. If your cable box (or any future cable box) requires HDCP, I would ask SeeSpace to confirm their certification before backing.

Software

The real issues are with the software. The features demonstrated show a very cool TV viewing experience. Take for example this race overlay:

Sure, baseball and football broadcasts will contain a little counter in the corner that gives you all that you need to know, but there’s a lot more data to display for a race. This system allows the user to have access to a large amount of information as it unfolds.

The big question is: who is providing this information? The InAir description mentions that this display is a “port” of the existing Formula 1 app, so in this case the Formula 1 app people are supplying it. The app is supported by the F1 organization, so it can stream information from a race that may not make it into the TV broadcast.

What SeeSpace doesn’t mention is that the app costs $14 and is currently only available for a few mobile devices. My first thought was that they might be running the app in some sort of heavily modified Android OS, but the integration is just too good for that to be the case. For example, the app does offer a 3D view of the course, but it looks more like a Google Maps satellite view than the sleek 3D map shown in the demo, which precisely matches the theme of the InAir’s user interface.

In order to produce a screen like this, SeeSpace would need their own custom application that interacts with the Formula 1 app’s backend. Building this would require access to the app’s source code, or at least to some kind of API, and since it is not an open-source app, that would mean partnering with its developers. When I contacted the F1 app people about such a relationship, I got this response:

No we have not provided them anything. It looks like they have created a mash up of various things including our assets. Whilst this is not technically legal in the UK, its a mash up, so probably fair use in US law. The biggest challenge will be commercial viability for a very small platform.

This directly contradicts what SeeSpace has said:

It’s strange that they could port it without the source code or anything else from the F1 app team. There’s a very good possibility that they are lying about porting this app. It’s likely a simulation of what the final product could do, not a demonstration of what it does.

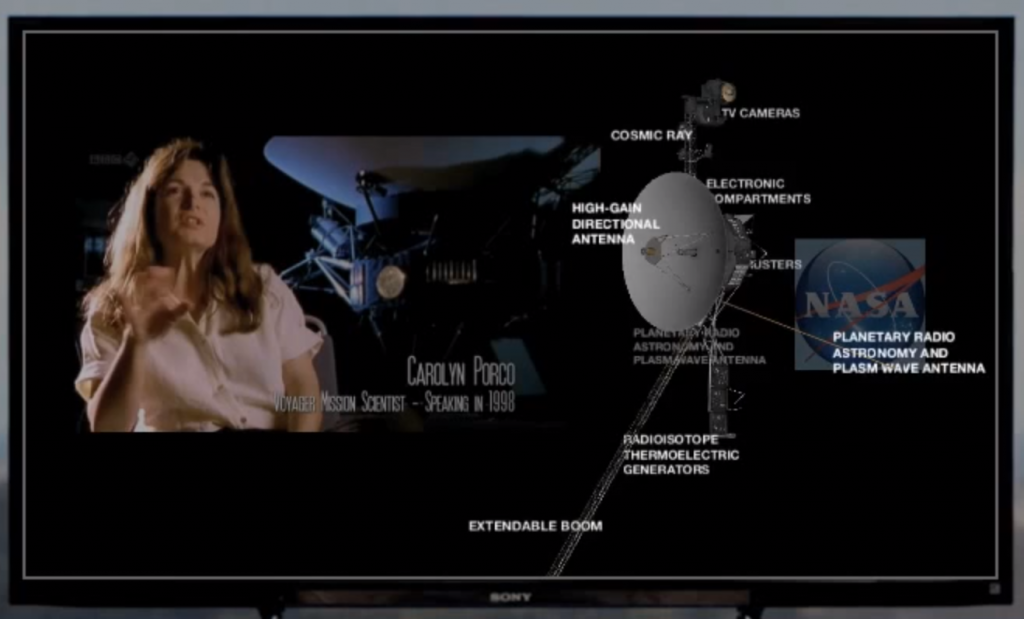

What about television content that doesn’t even have an associated app? They demonstrate a rather elaborate set of features for enhancing a documentary on space exploration:

The video in this case appears to be from the BBC documentary series “The Planets”, which was released in 1999. A standard-definition version is actually available on Netflix if you’re interested:

I just finished watching it myself, and it was excellent.

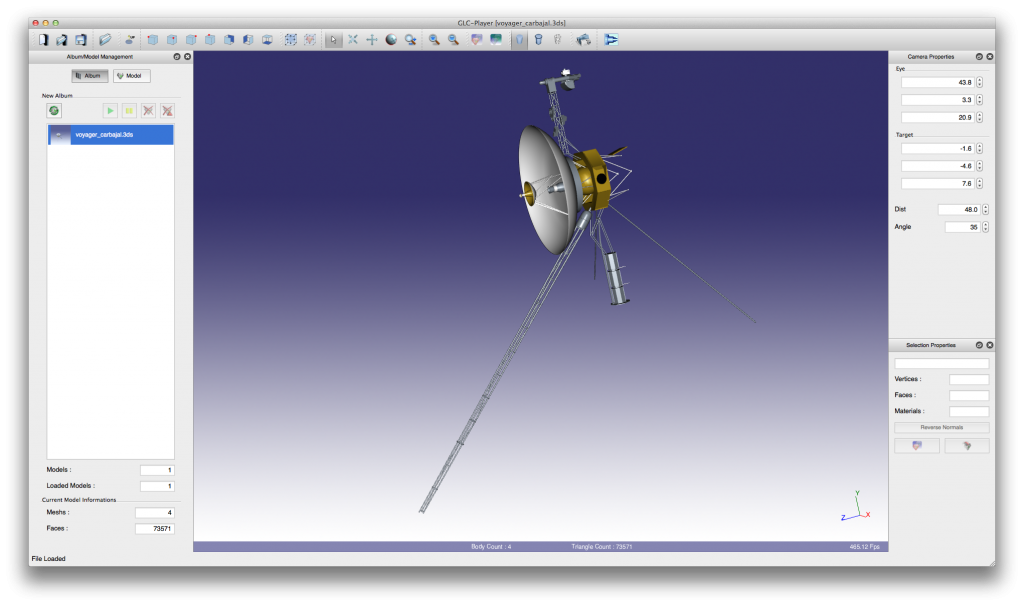

The video demonstrated on the InAir overlays this documentary with a 3D model of the Voyager probe that it supposedly found on the internet all by itself. A search for “Voyager probe 3d model” brought me to a NASA page containing several 3D models of space probes. I found two different models of Voyager: one in .3DS format and one in Blender’s .blend format:

Both of these models required special software to view.

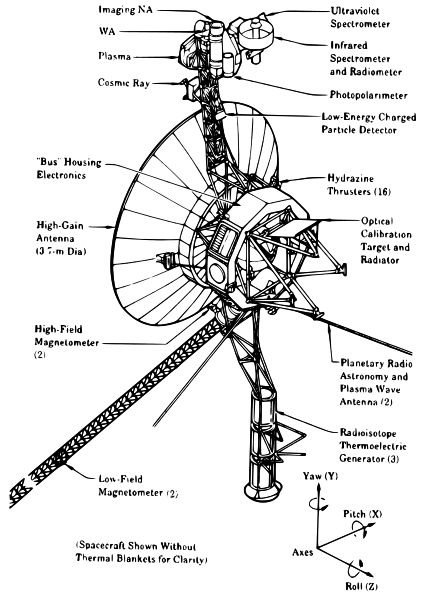

It looks like they’re using the Blender model in the video (based on some of the detail and texturing not present on the 3DS model). This model looks great, but it lacks the labels shown in the video. For that level of information, I needed to find a diagram such as this one on Wikipedia:

That diagram seems to agree more or less with the labeling the InAir showed.

So what you have here is a sort of “book report” being assembled automatically about the content shown on the screen. This kind of information is very useful for someone wanting to learn more about space exploration, and it would certainly enhance any viewing experience.

The only problem is there’s very little explanation for how the InAir is doing it. They offer the following:

This example shows the possibilities of projecting 3D models into the room to navigate and explore. It also demonstrates the way InAiR can access open web information such as Wikipedia and other relevant sites on the Internet. In this example it’s the NASA website and its library of photographs.

Okay, so let’s talk about the number of things that have to go right for this particular overlay to work:

- The software needs to identify that the documentary is currently talking about the Voyager probe. According to their FAQ, they’re using “channel guide, tele-text, and voice layer to understand the context of what you’re watching based on keywords.” We all know how reliable that can be. I’m not certain the TV guide data would even contain the word “Voyager”, so they would need to pick it (and its context) out of the program’s subtitles (if available) or use voice recognition, which is extremely challenging if there is any background noise or music in the soundtrack.

- To find information on this space probe, the InAir would need to search the internet and somehow know to filter out any results related to the Star Trek television series of the same name.

- Assuming the InAir somehow understood automatically that the Voyager probe is a physical object, it would need to search for a 3D model and download it. There is more than one model available, so it would have to arbitrarily pick one of them. The file is zipped, so after unzipping, it would need to distinguish the Voyager .blend file from the PNG texture files and find a suitable way to open it. There are dozens of different 3D model formats and even more still image formats; it would need ports of both a Blender viewer and a 3DS Max viewer. It would also need to figure out how to orient the model: the .blend file had the probe tilted 90 degrees from how it was shown in their demo.

- After opening the file, it would need to identify the parts of the probe on the 3D model and cross-reference them with a number of 2D diagrams found elsewhere on the internet. This is an incredibly difficult machine vision problem, especially considering that many of the 2D diagrams online are line drawings that bear little resemblance to the 3D model (from a computer’s point of view).

- Unless you want to stare at a spinning “loading wheel”, all of this needs to happen nearly instantaneously. The device also gets no time to prepare if you flip to a particular channel and immediately start looking for augmented content.
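To put the first few steps in perspective, here is a deliberately naive Python sketch of keyword-based context detection: spot a keyword in caption text, guess which meaning of “Voyager” is intended from the nearby words, and pick a model file out of a downloaded archive listing. Everything in it is hypothetical (the cue-word lists, the function names, the sample captions). SeeSpace hasn’t published how their engine works, so this only illustrates the kind of guesswork involved; it is not their method.

```python
import os
import re

# Hypothetical cue words for each meaning of an ambiguous keyword.
# A real system would need lists like these for every topic on TV.
KNOWN_TOPICS = {
    "voyager": {
        "space probe": {"nasa", "probe", "spacecraft", "jupiter", "saturn", "planet"},
        "star trek series": {"trek", "janeway", "starship", "captain", "episode"},
    },
}

# Hypothetical set of 3D model extensions the device knows how to open.
MODEL_EXTENSIONS = {".blend", ".3ds"}


def tokenize(text):
    """Lower-case the text and split it into alphabetic words."""
    return re.findall(r"[a-z]+", text.lower())


def spot_keywords(caption_lines, topics, window=10):
    """Find known keywords in the captions and collect the words around each hit."""
    words = [w for line in caption_lines for w in tokenize(line)]
    found = {}
    for i, word in enumerate(words):
        if word in topics:
            context = words[max(0, i - window): i + window + 1]
            found.setdefault(word, []).extend(context)
    return found


def disambiguate(keyword, context, topics):
    """Pick the meaning whose cue words overlap the context most, or None if unclear."""
    context_set = set(context)
    scores = {sense: len(cues & context_set) for sense, cues in topics[keyword].items()}
    top = max(scores.values())
    winners = [sense for sense, score in scores.items() if score == top]
    # No overlap at all, or a tie, means the captions don't settle it.
    if top == 0 or len(winners) > 1:
        return None
    return winners[0]


def pick_model_file(archive_names):
    """Return the only 3D model file in an archive listing, or None if there are zero or several."""
    candidates = [
        name for name in archive_names
        if os.path.splitext(name)[1].lower() in MODEL_EXTENSIONS
    ]
    return candidates[0] if len(candidates) == 1 else None


if __name__ == "__main__":
    captions = ["In 1977 NASA launched the Voyager probe", "toward Jupiter and Saturn."]
    context = spot_keywords(captions, KNOWN_TOPICS)
    print(disambiguate("voyager", context["voyager"], KNOWN_TOPICS))
    print(pick_model_file(["voyager/voyager.blend", "voyager/texture1.png"]))
```

Note how little it takes to break this: a caption like “Voyager changed everything” gives the sketch nothing to go on, and an archive holding both a .blend and a .3ds file forces an arbitrary choice. Those are exactly the gaps a real product would have to close automatically, for every program on every channel.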

In short, there is a lot of difficult work that this machine has to do to present the information shown. Google does this kind of work with some of their more general search results:

This is the kind of information that InAir is trying to show, but even Google, the multi-billion-dollar company whose mission statement is “to organize the world’s information and make it universally accessible and useful”, can only display a few images, a summary ripped from Wikipedia, and a few dates that are probably also ripped from Wikipedia. The level of detail in the automated results on the InAir overlay is eons beyond what even Google has accomplished. If they can really pull off something this sophisticated, they shouldn’t be selling a TV dongle; they should be selling this algorithm to universities.

The only way I can see something like this being even remotely plausible is if a human or team of humans is somehow involved in curating the content displayed; there is no way a computer today can automatically provide the information shown while also filtering out all of the other noise on the internet.

Perhaps the content providers will do it themselves? Their FAQ states:

What I can tell you is that content providers are interested, but I can’t talk specifically about their interests.

Uh huh… I’m more inclined to believe the F1 guys. The platform is too small to be profitable.

Can they do it?

When you realize how many television programs are out there, you start to see why this is such a staggeringly difficult problem to solve without total automation. SeeSpace is sweeping all of those important issues under the rug and focusing instead on gee-whizzing people with a fancy user interface. Even their “Risks and Challenges” section talks entirely about manufacturing concerns without mentioning this back end at all.

The only time they address the algorithmic side of things is here:

We have a patented InAiR content recognition engine, based on our own algorithms, which intelligently identifies relevant Internet and social content with what the viewer is watching and delivers it to the TV screen in real time.

I found a few relevant patents from Dale Herigstad involving video delivery, but none of them mentioned any special algorithms for content recognition or data acquisition. I left a question in their user comments section asking for a patent number, but they appear to be ignoring me.

They’re also responding to legitimate technical questions from backers with an unsettlingly coy attitude. When one backer was discouraged by the lack of Video on Demand support (Netflix, etc.), SeeSpace responded with:

They never mentioned Video on Demand in their project description; it only comes up in their FAQ which isn’t even linked from the Kickstarter page. It’s not a dumb question, but they’re treating it like it is.

Their vague reply and disparate information almost make it sound like their product spec hasn’t really been nailed down yet, which makes one wonder how much they’re willing to change before they actually ship. If Netflix is a major feature, advertise it and stand by it. Don’t make off-hand remarks in your comment section.

Without more information on how the automation system works, I have to conclude that this device simply will not function as advertised and that the demonstrations shown so far are forgeries created with specially hand-curated content that is not attainable with an automated system or sustainable with a human one. Sure, there may be certain programs like Formula 1 racing that work (assuming they actually get access to the app’s source code someday), but for everyday use? Forget about it.

The Voyager probe had some of the most sophisticated data collection equipment available at the time, but it still needed humans to interpret what it found.

The overlay on HDCP-protected content could be implemented using bunnie’s NeTV hack: “the attack enables forging of video data without decrypting original video data, so executing the attack does not constitute copyright circumvention.” But good luck getting it past compliance.

(although all their examples show the video being rescaled before their overlays, which would necessarily require decrypting the original video…)

I don’t see why what they do would be a cause for concern regarding HDCP. I have an Onkyo receiver that is HDCP compliant and does lots of overlaying (see 4:27 in this video):

http://www.youtube.com/watch?v=-xmBtZtPrto

That receiver isn’t sending any information about what you are watching over the internet and is physically incapable of doing so. If InAir can get HDCP certified, they can do it just like Onkyo did, but if their device looks too much like a video capture device (capturing frames from video and transmitting information from them over the internet), they may not get certified.

So what they could do is send samples of a pixel of a frame instead of a stream. If I remember correctly, HDCP does allow you to buffer decrypted video for image processing, so if they are smart, they can do what I’ve just described and get it approved! Hopefully they will credit me for the idea! An InAir would be nice; I can do a lot with one if they have an open API!

I mean “a few pixels” not one!

I agree with your point on Voyager; it must be showing a specially made second-screen app, like F1, not something fully automated! Regardless, I still think the interface is cool, and it would probably make a lot of sense if Samsung and LG built it into their smart TVs!

Regarding HDCP, it’s not such a big deal. There are many pre-certified HDMI RX and HDMI TX chips (even on DigiKey!) with built-in HDCP keys, which output parallel RGB data. In fact, the majority of HDMI chipsets now come preloaded with HDCP keys, so the manufacturer doesn’t need to do anything to support HDCP. That part is very easy and cheap: they will need RGB data to overlay their stuff anyway, so the receiving end is covered, and the SoC they are (or will be) using will likely have HDMI out (most likely with HDCP as well), so the output is solved too.

Adding the obligatory “probably useless in Europe” warning. Cable TV there is broadcast using the DVB-C standard, so cable networks don’t provide special proprietary set-top boxes. All you get is a standard aerial input and possibly a smartcard reader for encrypted channels. I’m not sure there’s a huge market for external DVB-C adapters any more, *especially* ones with HDMI output, when DVB-T/C functionality is already built into new televisions. People generally tend to hate random set-top boxes and messing with their cables.

And yeah, getting the augmented data feeds is going to be painful. If they did provide DVB support, that would actually help, because they’d know what channel you’re watching and what shows are currently on (from the EPG). They can’t easily deduce that from an already decoded and converted HDMI signal, can they?

That said, I like the idea that you could add “smart TV” type of stuff to *any* TV via an add-on box. I sometimes wish I just had Android running on my television, for example.

My computer shows a warning when I open your site. Are you sure it is virus-free?

Amazingly, some people are posting Kickstarter comments to say they’ve received a SeeSpace InAir. Less amazingly, the augmented content isn’t as smart as the pitch video suggested. It would be nice if some of the tech sites that breathlessly reported on the campaign launch would now find a unit and subject it to a proper review. Short of that, here’s the most detailed comment from someone who got it working:

“Got it here in Canada. Customs fees were $17ish so not bad. Connected and updated the firmware, with about 10 minutes everything was working well using my Xbox and watching Netflix. The image was noticeably darker, and there doesn’t seem to be a whole lot of content other than posting to Facebook and Twitter. You can ‘listen’ and it will display info on music being played etc, but no show details at all.”